Art in the Electronic Landscape

Issue 16:2&3 | September 1996

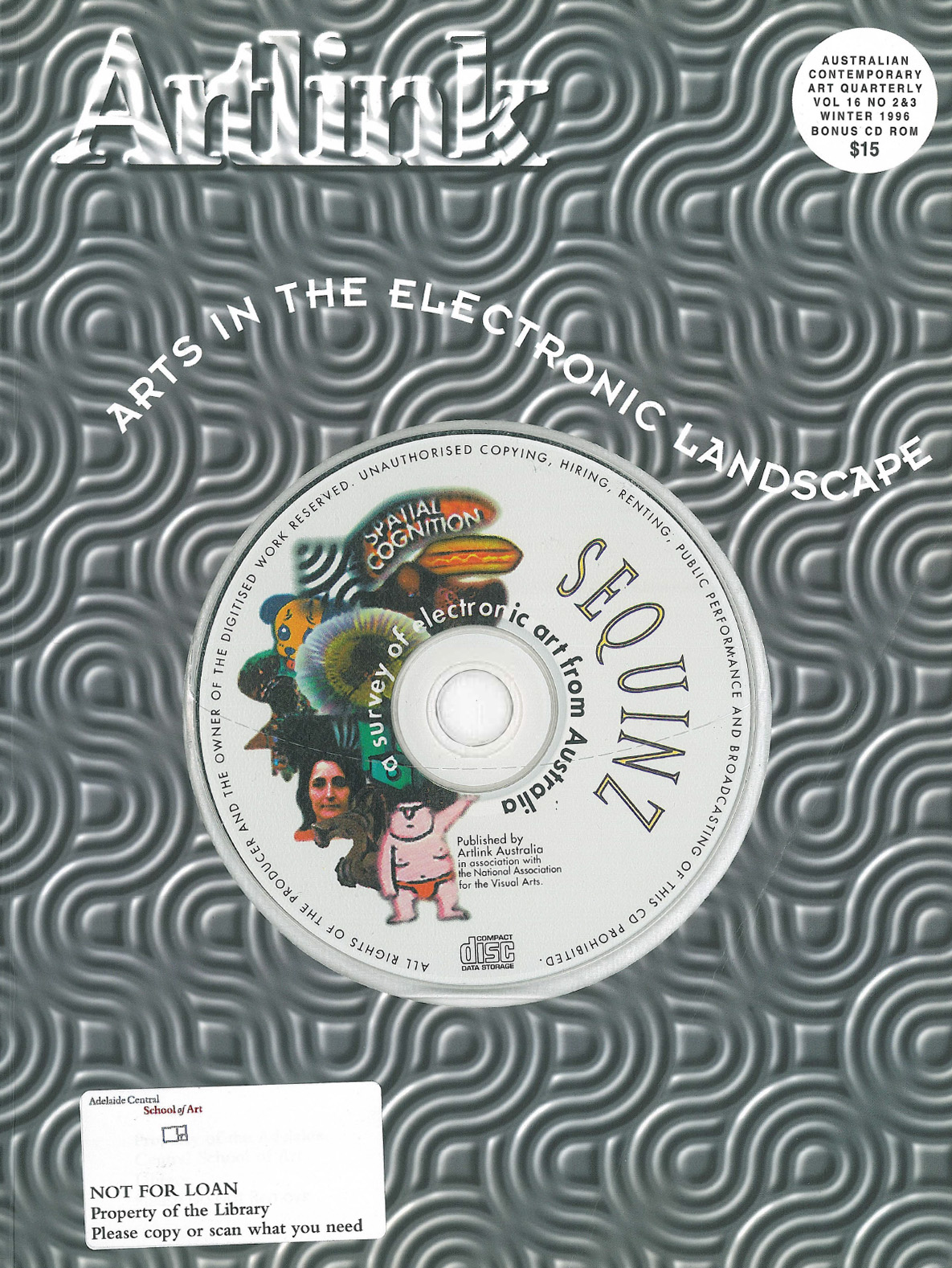

Double issue, released with Artlink's CD Rom Sequinz, a survey of electronic art in Australia (Mac users only). The issue examines multimedia and education, frontiers and challenges, the future and audience interaction. A cutting edge issue that opens up many of the ongoing debates about the impact of the digital world on traditional artistic modes of expression.

In this issue

Counting Digits: Electronic Art will not go away

A witty and wise approach to issues confronting electronic arts by a celebrated art theorist and philosopher. He starts by speculating on a contemporary analysis of the first stone carvings that were not useful directly as tools, but indirectly as symbolic substitutes or stand-ins for some other thing....

Negotiating Artistic Practice in Late Capitalist Techno-Culture

Whatever art is to become in the realm of consumer electronic culture, it is critical that some sort of autonomy be maintained from the pressures of the technological imperative. Otherwise the politic implicit in the technological manifestation will override the necessary anarchic liberty of art.

Emergent Behaviours: Towards computational aesthetics

Overview of the electronic arts moving from the 1960s to the creation of artificial intelligences. Discusses various artists and their projects.

Holography permeates art and commerce? A question of time

One of the strange novelties of running a large format holography business is the regular telephone calls one receives from inspired and inventive people who clearly see holography as representing some kind of magical solution to often relatively ordinary problems.

Spectres of Cyberspace

A new spectre is haunting Western culture: the spectre of virtual reality. For if we are to properly characterise cyberspace as a postmodern phenomenon, it must be as part of a postmodernism that does not come after the modern so much as assertively re-enacts modernism's desire to fold back on itself...

Is there art on the Web?

The Web is unknowably large and diverse. Lists web sites.

The Artists Interface 1/0

The interface paradigm is at the core of current work by artists in the area of interactive multimedia. All the works in the exhibition Burning the Interface at the Museum of Contemporary Art, Sydney, approach the issues of interface design and interaction with the audience or user or interactor in different ways.

Landmark Exhibition: Burning the interface

Exhibition review: Burning the interface: International Artists CD Rom, Museum of Contemporary Art, Sydney NSW, March - July 1996.

Interactive Phantasmagoria: Pre-Cinema to Virtuality

Exhibition review: Phantasmagoria, curated by Peter Callas and David Watson.

Virtual Topographics: an interview with Peter Callas

It is now well accepted that what has been variously dubbed the "new media", "electronic arts", "multimedia", or "hybrid arts" are rapidly changing how we engage with issues of subjectivity, identity, nationality and interactivity.

Living in Second-Natureland: the role of obsession in Technology-based Art.

From a confession of context, the author looks at new definitions where 'life' and 'experience' are defined as time spent engaging with technology, where 'space' and 'time' are primary sources for experience; the necessity of obsession and the work of Adrienne Jenik.

The Planetary Collegium: art and education in the post biological era

We live in deeply cynical, distrusting and despairing times... The author calls for a Planetary Collegium to advance the electronic media and its related educational process.

I couldn't do my homework: the cat ate my mouse

The role of computers in the education of young people, looking at examples from students in schools in South Australia.

A Magic Toyshop: an interview with John Bird

John Bird is Associate Professor at the Centre for Animation and Interactive Multi Media at RMIT, Melbourne, Victoria. Looks at developing technologies and entertainment against the background of this centre for the new media arts.

The Tasmanian Connection

The electronic landscape in Tasmania is only just beginning to be visibly integrated into the exhibition culture...largely influenced by a young generation of artists recently graduated or arrived at the Art School and Conservatorium in Tasmania.

In Search of a Continuum

An article curated by the author whereby four artists were invited to select two of their documentation images and write captions for these images after considering issues of control, motion, space and trigger - issues that span old and new technologies in order to discover whether a sense of a continuum of approach and practice may emerge.

Cyb(erotic) transformations

If the human body is disappearing into technology, then technology too is disappearing into flesh. Not just literally integrating into human skin and bone, but incorporated into our psychology and body imagery, creating a body as cyborg.

Fax me your head (in 3D)

Collaborative article by Dorothy Erickson, Jill Smith, Stephanie Britton and Phil Dench. The process of scanning in 2D images, of manipulating and combining them in electronic paintboxes, and of printing the results is familiar to many artists. Techniques are now being developed that will allow us to do the same thing with three dimensional objects.

Computers, machines, mathematics.

There are two specific problems attached to creating 3D computer art. One is funding and the other is the frequent need for expert and professional assistance. Examines the issues faced by artists working in the area of 3D computer art.

Nigel Helyer's Silent Forest

An installation by Nigel Helyer at the Walter McBean Gallery, San Francisco Art Institute, as part of Soundculture, San Francisco, April 1996.

Skadada@pica

Skadada at the Perth Institute of Contemporary Art was an instant success when it was performed in September 1995. It is a dance, music and computer hybrid art performance with works by Katie Lavers, Jon Burtt and John Patterson using innovative MIDI technology with computer graphics, video and manipulated sound keyed in by a live performer.

Waiting for the CyberMuse

Moral: Having the tools available doesn't necessarily generate artistic ideas but when the idea is there, the artist will move heaven and earth to execute it.

Bursting with opinions

Book review: Critical Issues in Electronic Media, edited by Simon Penny, State University of New York Press, USA, 1995.

CMCs Open for business

Looks at the role of CMCs (Cooperative Multimedia Centres), which are rapidly spreading across Australia. CMCs are government seeded consortia of a range of shareholders and stakeholders, designed to develop and support local and national multimedia industries. They all have a tripartite brief: education and training; research and development; and industry support...

Electronic art in Australia: do we have critical mass?

Looks at art practice as it has developed since 1987, using the 1987 ARTLINK issue 'Art and Technology' (Vol 7 Nos 2 & 3, 1987) as the starting point for what was at that time the mostly unrecognised relationship between art and technology.

Cyber Cultures in Western Sydney

Article with co-curator David Cranswick. Cyber Cultures at the Performance Space Gallery is an on-going exhibition and performance project initiated by Street Level, an artist run initiative in Western Sydney.

Electronic Media Collections

Looks at the collection of electronic artworks held by Griffith University, Queensland.

Perth Science Centre employs artists

Opened in August 1988, Perth's Scitech Discovery Centre aimed to add a 'fun' environment to the physical and human sciences, with hands-on interactions and experiences. From its inception it employed design graduates to design and make many of its displays.

Playing with the Planet

Digital Arts Film and Television is developing an interactive game based around the film Epsilon, which award winning director Rolf de Heer produced with Digital Arts in 1995. Producer Sean Caddy previews this most unusual computer game, which has no violence and is a chilling scenario of the crisis in the ecology of planet Earth.

The Virtual Museum

Looks at Compact Disc Interactive or CDi which allows people to chart their own personal pathways through material.

Negotiating the Museum of Sydney

Review of the new Museum of Sydney whose challenge it was to compose a cultural metaphor for Sydney. It was conceived as a site that lets the visitor experience the jostling versions of what was going on, what it looked, sounded and behaved like.

Open Market: Computer art in China

"From herbs and opium to Amway and Coca-cola. The market opens and the game starts."

Loudscreen: Animation network

In an expressive sense animation is as personal a medium as writing. New technologies have the potential to build on this trend...

MM in Queensland education

Multi media education requires a wide range of resources. In Queensland the Bachelor of Multimedia at Griffith University draws on the expertise of five faculties and colleges.

Arts Queensland

In March 1996, Arts Queensland presented the Giles Consulting report on the state of play in multi media to a packed public forum at the Museum of Modern Art. Outlines the recommendations.